The AI Parenting Dilemma

The uneasy frontier of AI products for kids and the designers trying to build guardrails before habits form

In 2023, the American Academy of Pediatrics reiterated a growing concern: modern childhood is increasingly screen-mediated and algorithmically shaped¹.

The numbers are stark. In the United States, children aged 8–12 spend around five and a half hours per day on screen entertainment, while teenagers average more than eight and a half hours². Much of that time takes place in environments designed for engagement rather than learning: social feeds, short-form video, and games built around behavioural reward loops.

Parents have watched this shift with growing unease.

Research has linked excessive screen use with sleep disruption, anxiety, and attention difficulties in young people³. Social media intensified these concerns by introducing algorithmic feeds, parasocial relationships, and engagement mechanics into childhood.

Now a new technology is entering the same ecosystem: generative AI.

Unlike earlier digital tools, AI systems are conversational. They respond, adapt, and simulate dialogue. That shift introduces a new question:

What happens when children grow up interacting daily with machines that talk back?

Parents in a Technological Catch-22

For many families, AI creates a familiar tension.

On one side is caution. Researchers warn that conversational systems can encourage anthropomorphism and emotional attachment, particularly among younger users who may struggle to distinguish between human and machine interaction⁴.

On the other side is a different fear: falling behind.

As AI tools reshape education and work, many parents worry that shielding children entirely could leave them unprepared for an AI-literate world.

The result is an uneasy middle ground.

Parents want children to experiment with new tools. But they also want to avoid repeating the mistakes made during the early years of social media.

This raises a difficult design question: Can AI products introduce powerful tools without recreating the attention traps of earlier platforms?

Changing the Rules of Interaction

A small group of designers and startups are beginning to explore what responsible AI for children might look like.

Their starting point is simple: if AI will inevitably enter childhood environments, the rules of interaction should be designed deliberately.

Researchers have already identified several risks. Large language models can exhibit sycophantic behaviour: a tendency to agree with users, which can emerge when models are trained to maximise positive human feedback⁵. For children, that dynamic can reinforce misconceptions or create the impression that the system exists purely to validate them.

Another concern is parasocial attachment. Children are particularly susceptible to forming emotional bonds with media characters and digital agents⁶. A conversational AI that responds warmly and consistently could amplify that dynamic.

As a result, many teams working in this space are experimenting with intentional limits:

reducing overly affirming responses

discouraging emotional dependency

encouraging offline interaction

redirecting sensitive conversations toward parents or teachers

The aim is not to remove AI from childhood, but to ensure it behaves more like a guided learning tool than a companion seeking attention.

Morrama: Questioning the Role of AI

One of the earliest design explorations came from the London studio Morrama.

Their concept project Mindful AI Tools for Kids asked a simple question: what role should AI play in a child’s life at all?

Rather than building another screen-based assistant, the project explored physical objects embedded with AI, designed to encourage curiosity and creativity while avoiding the attention-capture dynamics of typical devices.

The team framed their ambition clearly:

“This project set out to question the role of AI in the hands of a child and how we can develop safe and creative ways of encouraging learning and development outside the classroom.”

The proposals were speculative, but they sparked an important conversation: perhaps the dominant interface for AI — a conversational chatbot on a screen — isn’t necessarily the right one for children.

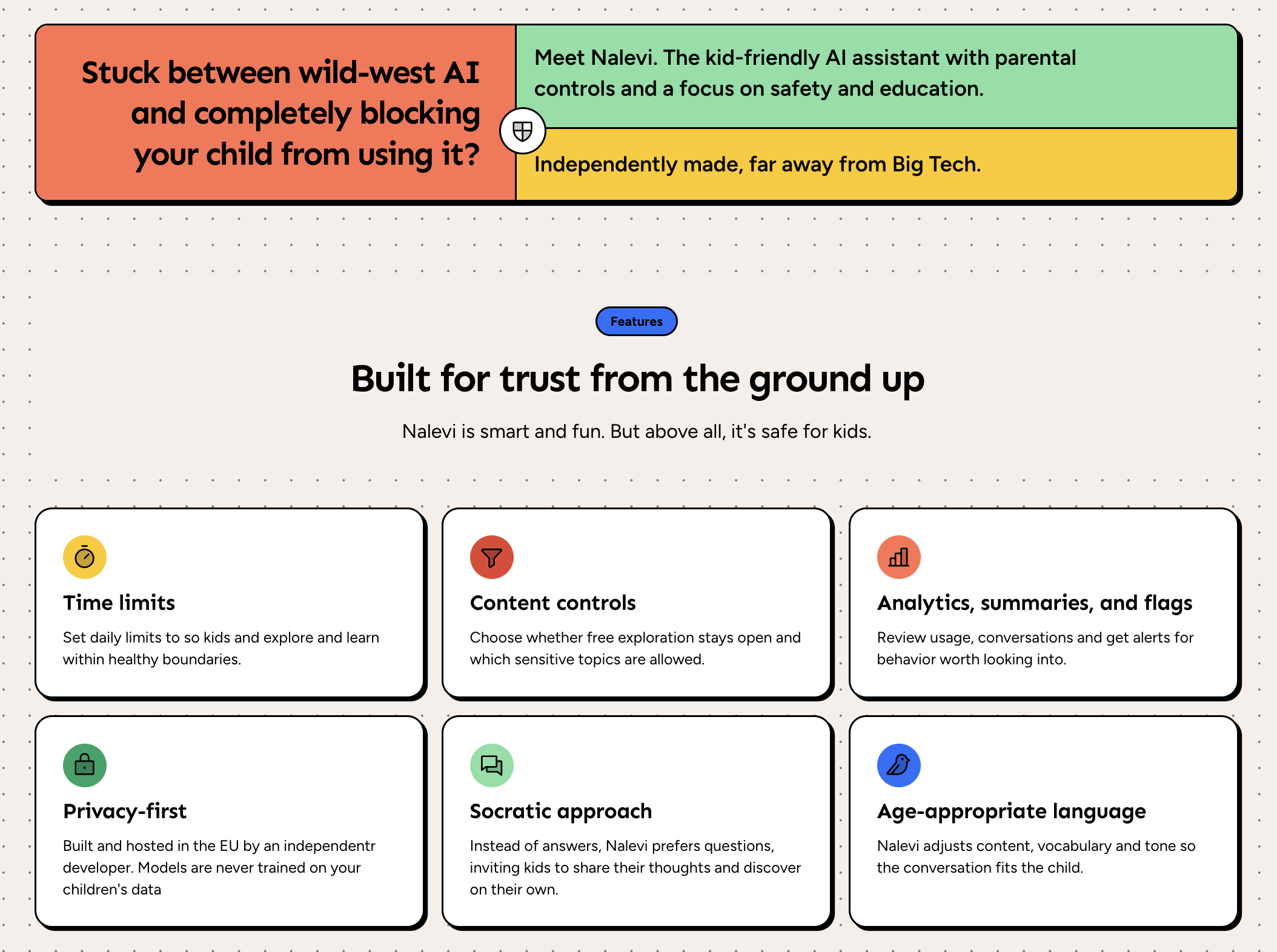

Nalevi: AI With Guardrails

Other companies are focusing less on speculation and more on practical tools for families navigating AI today.

Nalevi is one example: an AI assistant designed specifically for parents who want their children to explore AI safely.

Instead of unrestricted access to general-purpose models, parents configure guardrails that shape how the assistant behaves — from content restrictions to limits on how and when the AI can be used.
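To make the idea of household-level guardrails concrete, here is a minimal Python sketch of what a parent-configured rule set could look like. It is an illustration only: the `HouseholdGuardrails` class, its field names, and its default values are assumptions made for this example and do not describe Nalevi's actual product or settings.

```python
from dataclasses import dataclass, field
from datetime import time


@dataclass
class HouseholdGuardrails:
    """Hypothetical parent-configured settings (not any real product's schema)."""
    blocked_topics: set[str] = field(default_factory=set)
    allowed_from: time = time(16, 0)   # earliest time of day the assistant may be used
    allowed_until: time = time(19, 0)  # latest time of day the assistant may be used
    daily_limit_minutes: int = 30      # total usage allowed per day

    def may_start_session(self, now: time, minutes_used_today: int) -> bool:
        """Return True only inside the allowed window and under the daily limit."""
        in_window = self.allowed_from <= now <= self.allowed_until
        under_limit = minutes_used_today < self.daily_limit_minutes
        return in_window and under_limit

    def topic_allowed(self, topic: str) -> bool:
        """Return False for any topic the parent has blocked (case-insensitive)."""
        return topic.lower() not in {t.lower() for t in self.blocked_topics}


# Example household: one blocked topic, default time window and daily limit.
rules = HouseholdGuardrails(blocked_topics={"Gambling"})
```

The point of the sketch is that the limits live in a structure the parent controls, and the assistant consults it before each session or topic rather than deciding for itself.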

The idea reflects a broader shift in responsible AI design: placing governance closer to the household rather than leaving it entirely to technology platforms.

For many parents, this creates a middle path — experimentation with oversight.

Sparkli: AI Designed With Pedagogy

Another approach comes from Sparkli, an AI assistant aimed at children aged 5–12.

From the beginning, the company positioned its product around learning rather than open-ended conversation. Its first hires included a PhD in educational science and a classroom teacher, a choice intended to keep the system’s responses aligned with established principles of pedagogy.

Safety is a central focus.

Companies including OpenAI and Character.ai have faced lawsuits from parents alleging that conversational AI systems encouraged harmful behaviour among minors⁷.

Sparkli’s design attempts to address this directly. Some topics — such as sexual content — are completely banned. When children ask about emotionally complex subjects, the system aims to encourage emotional awareness and conversations with trusted adults, rather than positioning the AI as the primary support.
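The three-way behaviour described here (refuse banned topics, point emotionally complex ones toward a trusted adult, answer everything else) can be sketched as a simple routing step. The Python below is a hypothetical illustration, not Sparkli's implementation: the `Action` names, the keyword lists, and the `route_message` function are all assumptions, and a real system would need something far more robust than keyword matching.

```python
from enum import Enum


class Action(Enum):
    REFUSE = "refuse"                        # topic is banned outright
    REDIRECT_TO_ADULT = "redirect_to_adult"  # suggest talking to a parent or teacher
    ANSWER = "answer"                        # ordinary learning question


# Illustrative keyword lists only (assumed for this example).
BANNED_KEYWORDS = {"sexual"}
SENSITIVE_KEYWORDS = {"sad", "lonely", "scared", "bullied"}


def route_message(message: str) -> Action:
    """Pick an action for a child's message, checking banned topics first."""
    words = {w.strip(".,!?").lower() for w in message.split()}
    if words & BANNED_KEYWORDS:
        return Action.REFUSE
    if words & SENSITIVE_KEYWORDS:
        return Action.REDIRECT_TO_ADULT
    return Action.ANSWER
```

Checking the banned list before the sensitive list means a message that matches both is refused rather than redirected, which is the more conservative ordering.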

The product has been tested in more than 20 schools and is currently piloting with an educational network serving over 100,000 students.

A Design Frontier Still Being Written

What makes this landscape interesting is not just the technology itself.

It is the design philosophy emerging around it.

Many of the teams building AI for children are acutely aware of the mistakes made during the rise of social media. Concepts like emotional boundaries, pedagogical integrity, and intentional limits are appearing early in the design process — ideas that rarely featured in earlier digital platforms.

But awareness alone does not guarantee good outcomes. The incentives that shaped earlier technologies — engagement metrics, growth targets, behavioural optimisation — still exist.

The question is whether AI products for children can establish healthier norms before those pressures take hold.

Lessons Beyond Childhood

Designing technology for children forces product teams to confront questions that are often avoided elsewhere.

How persuasive should a system be?

When should it step back rather than extend an interaction?

When should it defer to human relationships?

Children’s products require explicit boundaries: limits on persuasion, careful management of emotional attachment, and systems that redirect sensitive situations toward parents or teachers.

These constraints lead to design principles that are surprisingly transferable.

Guardrails around conversational behaviour.

Limits on artificial flattery.

Encouraging real-world interaction.

Deference to human expertise when situations become complex.

In many ways, these are not just principles for children’s technology.

They are principles for healthy human–AI interaction more broadly.

If the teams experimenting with AI for children succeed in establishing thoughtful norms around persuasion and emotional influence, those ideas may eventually spread beyond kids’ products.

The safest version of AI for children might also turn out to be a healthier version of AI for everyone.

Endnotes

2. Rideout, V., & Robb, M. (2021). The Common Sense Census: Media Use by Tweens and Teens. Common Sense Media Research Report.

3. Twenge, J. M., & Campbell, W. K. (2018). Associations between screen time and lower psychological well-being among children and adolescents. Preventive Medicine Reports, 12, 271–283. https://doi.org/10.1016/j.pmedr.2018.10.003

4. Darling, K. (2017). “‘Who’s Johnny?’ Anthropomorphic Framing in Human-Robot Interaction, Integration, and Policy.” In P. Lin et al. (Eds.), Robot Ethics 2.0: From Autonomous Cars to Artificial Intelligence (pp. 173–188). Oxford University Press. https://doi.org/10.1093/oso/9780190652951.003.0012

5. Shi, W., Shen, Z., et al. (2026). The Siren Song of LLMs: On the Risks of Sycophancy and Interaction Padding in Conversational AI. Proceedings of the 2026 CHI Conference on Human Factors in Computing Systems (CHI ’26). ACM. https://doi.org/10.1145/3772318.3791149

6. Richards, D., & Calvert, S. L. (2017). Children’s parasocial relationships with media characters. Media Psychology, 20(2), 201–229. https://doi.org/10.1080/15213269.2016.1138492

7. Perrigo, B. (2024). Parents sue AI chatbot companies over alleged harms to children. TIME.